From Tracing Execution to Tracing Decisions

In Part 1, we showed why traditional tracing breaks for agentic AI.

Distributed tracing was built to explain pipelines.

Agentic systems behave like decision graphs.

Tracing today tells us where time went.

Agentic systems require us to understand why decisions were made.

That is the core shift behind Tracing 2.0.

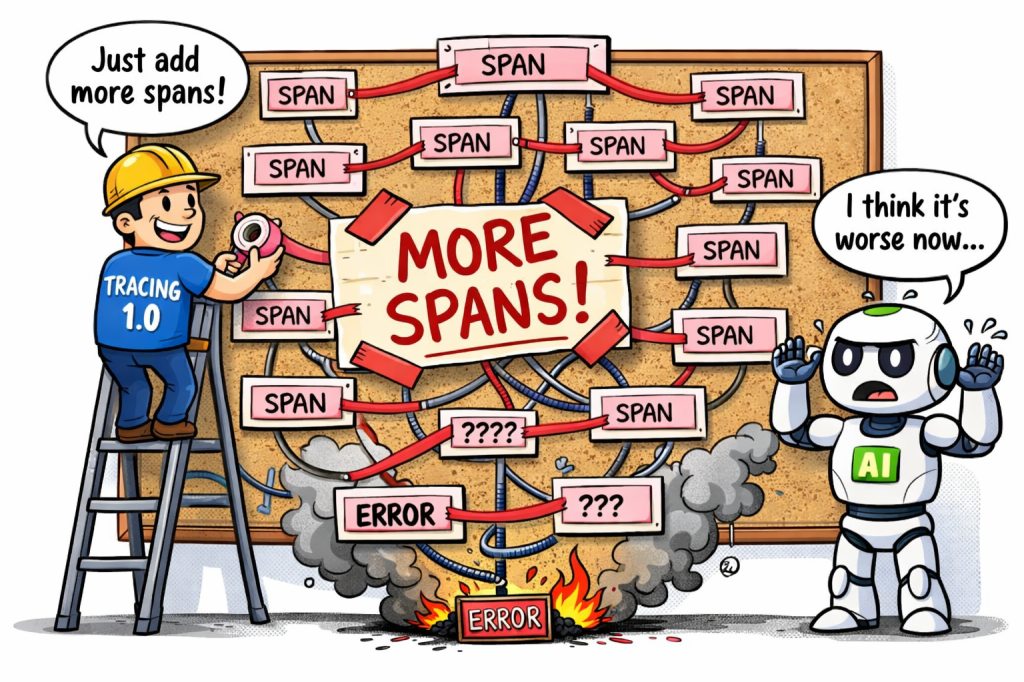

Why “More Spans” Is the Wrong Fix

When observability gaps appear, teams often respond by adding more spans.

This fails because agentic failures are rarely caused by slow execution.

They are caused by bad decisions.

Examples of failures that traditional tracing cannot explain:

- The agent chose the wrong plan even though tools worked

- The agent looped despite low latency

- The agent hallucinated despite correct retrieval

- The agent abandoned a valid reasoning path too early

Infrastructure was healthy. Latency was normal. The failure was cognitive, not mechanical.

This is why Tracing 2.0 cannot be a mere extension of Tracing 1.0: more spans capture more execution detail, not the reasoning behind a decision.

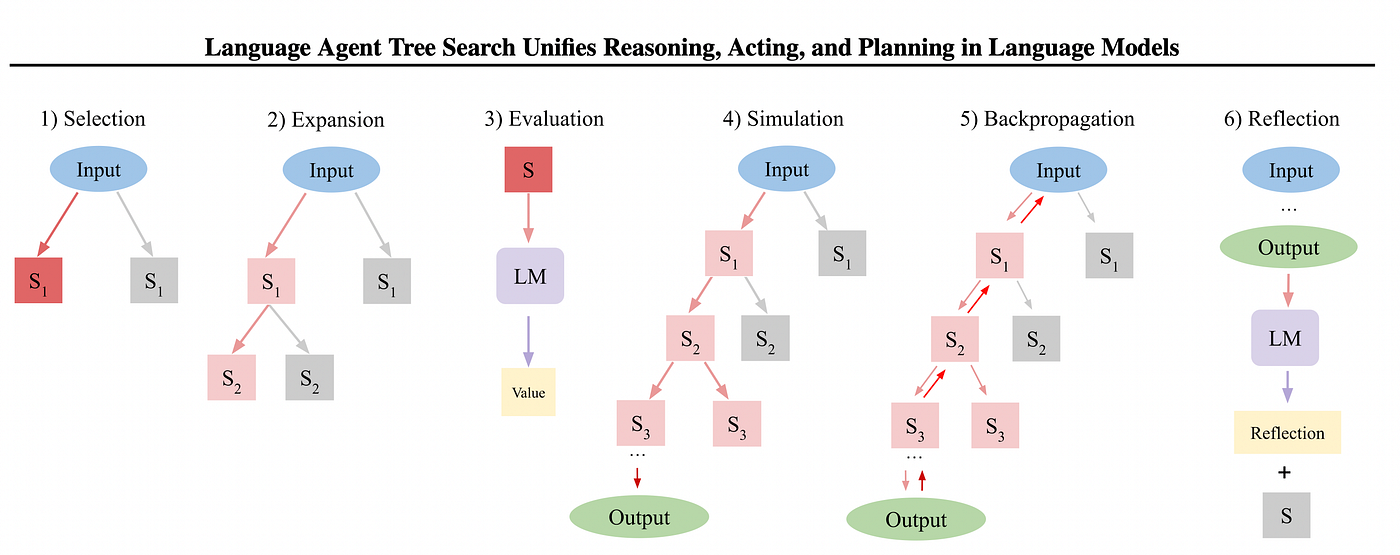

The Core Shift: Execution Traces → Reasoning Traces

Tracing 1.0 records what ran.

Tracing 2.0 must record how decisions evolved.

Instead of a linear timeline, a trace becomes a reasoning graph.

Each node represents:

- an intention

- a hypothesis

- a plan

- a confidence update

Each edge explains:

- why one strategy was chosen

- what evidence influenced the decision

- what alternatives were rejected

This is observability for systems that think, not just execute.
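As a rough illustration of the node and edge roles listed above, a reasoning graph could be modeled along these lines. This is only a sketch: the class names (`ReasoningNode`, `ReasoningEdge`, `ReasoningGraph`), the field names, and the sample rationale and evidence values are all hypothetical and do not come from any existing tracing library.

```python
from dataclasses import dataclass, field


@dataclass
class ReasoningNode:
    node_id: str
    kind: str      # "intention", "hypothesis", "plan", or "confidence_update"
    content: str


@dataclass
class ReasoningEdge:
    source: str
    target: str
    rationale: str                                      # why this strategy was chosen
    evidence: list[str] = field(default_factory=list)   # what influenced the decision
    rejected: list[str] = field(default_factory=list)   # alternatives that were not taken


@dataclass
class ReasoningGraph:
    nodes: dict[str, ReasoningNode] = field(default_factory=dict)
    edges: list[ReasoningEdge] = field(default_factory=list)

    def add_node(self, node):
        self.nodes[node.node_id] = node

    def add_edge(self, edge):
        # Refuse edges that point at nodes the trace never recorded.
        if edge.source not in self.nodes or edge.target not in self.nodes:
            raise KeyError("edge references an unknown node")
        self.edges.append(edge)

    def explain(self, node_id):
        """Return the incoming edges, i.e. the decisions that led to this node."""
        return [e for e in self.edges if e.target == node_id]


graph = ReasoningGraph()
graph.add_node(ReasoningNode("n1", "intention", "Identify UI freeze cause"))
graph.add_node(ReasoningNode("n2", "hypothesis", "Memory pressure"))
graph.add_edge(ReasoningEdge(
    source="n1",
    target="n2",
    rationale="Heap growth preceded the freeze",   # made-up value for illustration
    evidence=["heap_snapshot"],                     # made-up value for illustration
    rejected=["CPU saturation", "Network backlog"],
))

for edge in graph.explain("n2"):
    print(edge.rationale, edge.evidence, edge.rejected)
```

Keeping the rationale, evidence, and rejected alternatives on the edge rather than on the node means the question "why did we end up here?" becomes a lookup of incoming edges.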

What a Tracing 2.0 “Span” Looks Like

A traditional span answers:

- what ran

- where it ran

- how long it took

A Tracing 2.0 span must answer:

- what the system believed

- why it believed it

- what it chose not to do

Conceptually:

goal: "Identify UI freeze cause"

hypothesis: "Memory pressure"

confidence: 0.37

alternatives: ["CPU saturation", "Network backlog"]

decision: "Deepen memory analysis"

This structure enables explainability, replayability, and regression detection at the decision level, which execution-focused observability tools are not designed to provide.
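The conceptual span above could be captured as a small typed record. The following is a minimal sketch, not a proposed standard: the `ReasoningSpan` class name is hypothetical, and the check that confidence lies between 0 and 1 is an assumption added for illustration.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ReasoningSpan:
    goal: str
    hypothesis: str
    confidence: float
    alternatives: list[str] = field(default_factory=list)
    decision: str = ""

    def __post_init__(self):
        # Assumption: confidence is expressed as a probability-like score.
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def to_json(self):
        # Serialize with stable key order so spans can be diffed across runs.
        return json.dumps(asdict(self), sort_keys=True)


span = ReasoningSpan(
    goal="Identify UI freeze cause",
    hypothesis="Memory pressure",
    confidence=0.37,
    alternatives=["CPU saturation", "Network backlog"],
    decision="Deepen memory analysis",
)
print(span.to_json())
```

Serializing each span in a stable form is one way to support replay and decision-level comparison between two runs of the same task.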

New Observability Questions That Matter

With reasoning-aware traces, teams can finally ask:

- Did the agent choose the optimal strategy?

- Where did confidence inflate without evidence?

- Which decisions increased hallucination risk?

- Which tools correlate with bad outcomes?

These are production observability questions, not research exercises.
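To make one of these questions concrete, here is a sketch of how "where did confidence inflate without evidence?" might be asked of a recorded trace. Everything in it is assumed for illustration: the `ConfidenceUpdate` record, the `unsupported_confidence_jumps` helper, the 0.2 threshold, and the sample values.

```python
from dataclasses import dataclass, field


@dataclass
class ConfidenceUpdate:
    step: int
    confidence: float
    new_evidence: list[str] = field(default_factory=list)


def unsupported_confidence_jumps(updates, min_jump=0.2):
    """Return steps where confidence rose by at least min_jump with no new evidence."""
    flagged = []
    # Compare each update with the one immediately before it.
    for prev, curr in zip(updates, updates[1:]):
        jump = curr.confidence - prev.confidence
        if jump >= min_jump and not curr.new_evidence:
            flagged.append(curr.step)
    return flagged


# Made-up trace: step 2 rises with evidence attached, step 3 rises with none.
trace = [
    ConfidenceUpdate(step=1, confidence=0.37),
    ConfidenceUpdate(step=2, confidence=0.60, new_evidence=["heap_snapshot"]),
    ConfidenceUpdate(step=3, confidence=0.90),
]
print(unsupported_confidence_jumps(trace))  # prints [3]
```

A check like this only works if evidence is recorded alongside each confidence update, which is exactly the information an execution-only span does not carry.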

Observability Is Becoming a Trust System

In deterministic systems, observability answers:

“What broke?”

In agentic systems, observability must answer:

“Can we trust how this system thinks?”

Tracing 2.0 is not optional.

It is the foundation for safe, debuggable, production AI.

Coming Up Next (Part 3)

Next: How to Detect Reasoning Regressions Before Users Do

Signals, baselines, and confidence envelopes for agentic systems.
